Images showing large-scale anti-government protests in a European capital were shared in 2023 as photographic evidence of real civil unrest. The images were generated by AI. This case illustrates a shift in the misinformation landscape: fabricated political imagery no longer requires sophisticated video editing, stolen footage, or expert manipulation. Off-the-shelf AI image generators can now produce convincing crowd scenes in minutes, with no technical expertise required.

The Claim

In mid-2023, images depicting what appeared to be large crowds of protesters filling the streets of a European capital began circulating on Twitter/X, Telegram, and Facebook. Accompanying captions presented the images as photographic documentation of anti-government demonstrations, implying that civil unrest was being suppressed or underreported by mainstream media. The images showed photorealistic crowd scenes, flags, and signage consistent with political protest aesthetics. No specific event, date, or verifiable location was attached to the images. The vagueness appears deliberate: with no named event to check against, the images were harder to disprove through event-specific fact-checking.

How It Spread

The images circulated primarily in communities already skeptical of European governments and mainstream media coverage of political events. The Atlantic Council’s Digital Forensic Research Lab (DFRLab) documented multiple instances of AI-generated crowd and protest images being used in political disinformation campaigns across Europe during 2023, with inauthentic networks deploying synthetic visuals to support pre-existing narratives about political unrest. The images spread through a sharing pattern that bypassed standard reverse-image-search verification: because the images were newly generated and had no prior online existence, they returned no results in Google Images or TinEye, creating a false impression of original documentation. The absence of a search result was interpreted by sharers as confirmation of authenticity rather than as a flag for verification.

The Truth

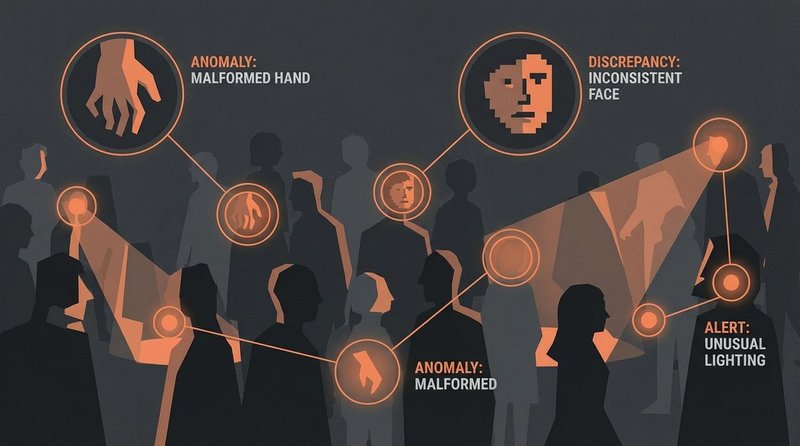

The images were generated using text-to-image AI systems (likely Midjourney, Stable Diffusion, or a comparable platform) and do not depict real events. Detection was accomplished through a combination of forensic image analysis and visual artifact identification. Hany Farid, professor at UC Berkeley and a leading researcher in digital image forensics, has documented the characteristic signatures of AI-generated crowd images: unnaturally uniform lighting across large crowds, faces in mid-distance that lack individual coherence, hands and fingers that show synthesis artifacts, and architectural backgrounds with subtle geometric inconsistencies.

The Content Authenticity Initiative (CAI), a coalition of media organizations and technology companies including Adobe, BBC, and The New York Times, was established precisely to address this challenge by developing open standards for provenance metadata, which allow images to carry verifiable records of their origin and editing history. Images generated by AI and distributed without provenance metadata are structurally unverifiable by conventional means, which is why provenance standards matter.

The DFRLab analysis of similar 2023 campaigns also identified a consistent operational pattern: AI-generated images were paired with real protest footage from unrelated events in other countries. Mixing fabricated but authentic-looking imagery with genuine documentation increased the overall credibility of the composite narrative.

How to Spot It

- Examine hands and peripheral faces: Current AI image generators handle primary subjects reasonably well but struggle with hands (extra or malformed fingers are common) and with crowd faces at medium distance, which often lack individual detail and coherence.

- An empty reverse-image search is not proof of authenticity: Newly generated AI images return no search results because they have never existed before. The absence of prior online history should prompt more scrutiny, not less.

- Check for provenance metadata: Tools like Adobe’s Content Credentials viewer or the CAI’s verify.contentauthenticity.org can check whether an image carries verified origin information. Legitimate news photography increasingly carries this data.

- Be wary of vague location and date claims: Images presented as evidence of real events but without specific locations, dates, or identifiable landmarks are designed to resist verification. Genuine protest documentation almost always includes contextual details that can be cross-checked.
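
For readers who want a quick first pass before opening a dedicated viewer, the provenance check can be roughly approximated in a few lines of Python. This is a minimal sketch under stated assumptions, not a verifier: it only scans a file's raw bytes for markers that commonly accompany EXIF data, XMP packets, and C2PA-style Content Credentials (a JUMBF box labelled `c2pa`). The function names and the wording of the results are hypothetical. A positive hint does not validate any signature, and a negative one does not show that an image is AI-generated, since many platforms strip metadata on upload.

```python
from pathlib import Path

# Byte markers treated as rough hints only (assumptions for this sketch).
EXIF_MARKER = b"Exif\x00\x00"                  # EXIF header inside a JPEG APP1 segment
XMP_MARKER = b"http://ns.adobe.com/xap/1.0/"   # namespace string that opens an XMP packet
JUMBF_MARKER = b"jumb"                         # JUMBF superbox type
C2PA_MARKER = b"c2pa"                          # label used for C2PA manifest stores


def metadata_hints(data: bytes) -> dict[str, bool]:
    """Report which metadata markers appear anywhere in the raw bytes."""
    return {
        "exif": EXIF_MARKER in data,
        "xmp": XMP_MARKER in data,
        # Require both markers to reduce accidental matches in pixel data.
        "c2pa": JUMBF_MARKER in data and C2PA_MARKER in data,
    }


def triage(data: bytes) -> str:
    """Turn the hints into a cautious next step; never a verdict."""
    hints = metadata_hints(data)
    if hints["c2pa"]:
        return "possible Content Credentials: confirm with a dedicated verifier"
    if hints["exif"] or hints["xmp"]:
        return "ordinary metadata only: provenance unverified"
    return "no metadata found: provenance unverified"


def triage_file(path: str) -> str:
    """Convenience wrapper that reads an image file from disk."""
    return triage(Path(path).read_bytes())
```

Calling `triage_file("protest.jpg")` (a hypothetical filename) returns one of the three messages above. Any result other than the first means the image should be treated as unverified, and even the first only indicates that a tool such as the Content Credentials viewer is worth running.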

Classification

This is a synthetic media fabrication case representing the current frontier of visual misinformation. Unlike deepfake videos, which require a real source person and significant processing, AI image generation requires only a text prompt and seconds of computation. The barrier to entry for visual political disinformation has effectively disappeared. The operational pattern documented here — vague geographic framing, absence of verifiable metadata, deployment within ideologically receptive communities — represents what DFRLab researchers describe as a new baseline for AI-assisted influence operations. The Content Authenticity Initiative’s provenance standards are the most credible technical response, but adoption remains incomplete across platforms and publishers.