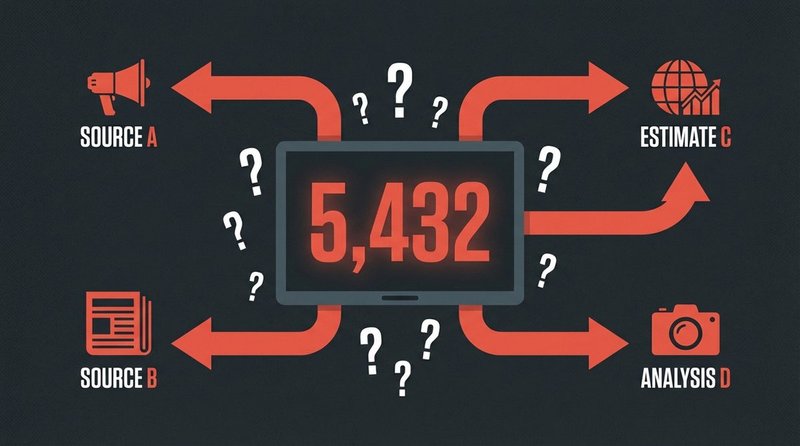

Numbers feel authoritative. In conflict reporting, casualty figures are among the most powerful rhetorical tools available — and among the most frequently manipulated. This case documents how a single set of contested figures was presented as verified fact, stripped of the methodological caveats that made them estimates rather than counts.

The Claim

Following the escalation of the Gaza conflict in October 2023, casualty statistics from the Hamas-run Gaza Ministry of Health were cited extensively — including by major news agencies — without consistent attribution or acknowledgment that the figures came from a party to the conflict. The numbers were presented in many contexts as independently verified totals. They were not: they were estimates from a single administrative source operating under active conflict conditions, with no independent cross-verification possible at the time of publication.

How It Spread

From October 8, 2023 onward, the figures circulated across social media in two contradictory ways. Pro-Palestinian accounts cited them as definitive proof of disproportionate harm; pro-Israeli accounts dismissed them entirely as fabricated propaganda. Both positions bypassed the actual methodological question: what do we know about how these figures were compiled, and what margin of error should be applied?

The Armed Conflict Location and Event Data Project (ACLED) — one of the most respected independent conflict data organizations — published methodological notes explaining that casualty figures in active urban warfare zones are structurally uncertain regardless of source. No party to a conflict produces neutral data. This context was absent from most viral presentations of the numbers.

The Truth

The casualty figures were estimates based on available reporting from medical facilities, morgues, and field documentation — a standard methodology for conflict casualty tracking, but one that carries inherent limitations. The Committee to Protect Journalists (CPJ) and independent researchers noted that figures from all parties in the conflict should be treated as provisional until post-conflict forensic verification is possible.

Presenting these figures as settled, independently verified counts — without attribution or methodological disclosure — crosses from reporting into misleading framing. The numbers may be broadly accurate, broadly inflated, or broadly undercounted. Without transparent methodology, the claim of certainty is itself misinformation.

How to Spot It

- Ask who compiled the figure and how: a government ministry, an NGO, a hospital, an independent monitor? Each has different access, incentives, and limitations.

- Look for confidence intervals or caveats. Rigorous conflict data typically states its uncertainty, either as a range or as explicit caveats. A single round number presented without qualification is a red flag.

- Check whether the source has access to the area being counted. Casualty figures from active conflict zones are estimates by definition — no one counts bodies in a live combat zone with precision.

- Cross-reference with ACLED, UN OCHA, and the conflict monitor Airwars, which document the methodology behind their figures explicitly.

Classification

This case is classified as missing source context / partisan framing. The underlying data existed and may reflect real events. The misinformation is structural: estimates were presented as verified counts, and partisan sources were presented as neutral ones. This pattern appears across virtually every modern armed conflict and is not unique to any political position. It is a systematic feature of how conflict statistics enter public discourse, which makes methodological literacy a core skill for anyone following news coverage of war.