The number of deepfakes reported globally rose from roughly 500,000 in 2023 to an estimated 8 million in 2025 — a 1,500% increase in two years. That figure is not a prediction. It is a documented escalation, visible in case databases, platform transparency reports, and the forensic archives of fact-checking organizations. This article covers the major case categories, the detection methods that work, and the regulatory framework now taking shape.

From 2024 to 2025: What Changed

The AI-generated misinformation documented in our 2024 year-in-review marked, in retrospect, a transition period. By 2025, three things had changed: generation quality improved enough that casual visual inspection no longer reliably detected fakes; the distribution infrastructure became more sophisticated, with synthetic content seeded into legitimate-seeming news aggregators; and the targets shifted from primarily political figures to a broader range including journalists, activists, business executives, and private individuals.

Four categories of AI-generated fake news dominated 2025: political deepfake videos, AI-generated protest and conflict imagery, voice clones used in disinformation campaigns, and fully synthetic news portals. Each is documented below with verified cases. For specific case-level detail, our database includes entries for the Zelensky surrender deepfake and AI-generated protest photos that provide methodological context for the patterns described here.

Category 1: Deepfake Videos of Political Figures

Deepfake videos targeting political figures in 2025 were more numerous, more geographically distributed, and harder to detect than in 2024. The most significant documented cases shared a common structure: a recognizable political figure, a fabricated statement aligned with an existing conspiracy narrative, and a distribution strategy that exploited platform recommendation algorithms before fact-checkers could respond.

Canadian Election Deepfakes

In the run-up to Canada’s 2025 federal election, deepfake clips mimicking CBC and CTV news bulletins circulated on social media. One clip purported to show a CBC news segment quoting Liberal leader Mark Carney making statements he had never made. The clips were identified by the MediaSmarts organization and CBC’s own verification team within hours, but had accumulated tens of thousands of shares before removal. The use of news broadcast formats — including on-screen chyrons and anchor-desk framing — was deliberate: borrowed institutional credibility is a documented pattern in political deepfake campaigns.

Southeast Asian Investment Fraud Deepfakes

In mid-2025, Royal Malaysia Police dismantled a network using AI-generated videos of politicians and business figures, including Prime Minister Anwar Ibrahim, Elon Musk, and Donald Trump, to promote fraudulent investment schemes. The videos were distributed via WhatsApp and Telegram channels. Face-swap quality was high enough that many recipients could not identify the manipulation without technical tools. This case illustrates a shift that researchers at MIT Media Lab’s Detect DeepFakes project had documented: deepfakes designed to deceive in a financial or fraud context are often higher quality than those designed for political messaging, because the financial payoff justifies greater production investment.

The Rubio Voice Deepfake

A voice-cloned audio clip falsely attributed to US Secretary of State Marco Rubio circulated in early 2025, depicting him making statements inconsistent with official US foreign policy positions. The clip was flagged by Pindrop’s voice authentication system and subsequently analyzed by several independent audio forensics teams. The detection relied on spectral analysis — voice clones consistently produce artifacts in specific frequency ranges that human ears do not register but spectral visualization tools surface immediately. This case prompted renewed calls for voice authentication standards in political communications, including from the FCC, which was already developing guidance following the 2024 Biden robocall ruling.

Category 2: AI-Generated Protest and Conflict Images

AI-generated images of protests and conflict events represent a distinct threat model from deepfake videos: they are cheaper to produce, harder to trace, and exploit the breaking-news moment when verification pressure is highest and audience skepticism is lowest.

The UK Riots Pattern (Established 2024, Continued 2025)

During the August 2024 UK riots, AI-generated images spread within hours of real incidents — including a fabricated photograph falsely showing British police officers prostrating before a Muslim group. The image was identified as AI-generated by forensic analysis within 24 hours, but had already been shared hundreds of thousands of times on X. The 2025 iteration of this pattern — documented across multiple protest contexts in Europe and North America — showed improved generation quality and faster initial seeding, suggesting more coordinated distribution rather than organic sharing. Full case documentation is available in our AI-generated protest photos database entry.

The “No Kings” Protest Images

In June 2025, AI-generated images depicting celebrities including Taylor Swift and Travis Kelce at US political protests circulated on Facebook and X. PolitiFact’s verification confirmed neither person was present at the events depicted. The images showed consistent visual artifacts (anatomically distorted hands, implausible crowd density gradients, synthetic-looking sky textures) that would be apparent under close inspection, but they circulated primarily as compressed thumbnails where such details are invisible. This case is documented in our database as an example of the celebrity-proximity manipulation pattern: attaching a recognizable, trusted public figure to a political message via synthetic imagery to borrow perceived legitimacy.

Disaster Coverage Manipulation

Every significant natural disaster in 2025 was accompanied, within hours, by AI-generated images depicting fabricated or exaggerated destruction. AFP Fact Check and BBC Verify documented this pattern consistently across flooding events in South Asia, wildfires in North America, and earthquakes in Central Asia. The production lag for AI image generation, now measured in seconds, means synthetic disaster images reach social media before official photography from the scene. Platforms that had implemented AI-content labeling caught some instances; platforms without it caught very few.

Category 3: Voice Clones in Disinformation Campaigns

Voice cloning is the fastest-scaling AI deception vector in 2025. Commercially available tools — including ElevenLabs, OpenAI’s Voice Engine, and several open-source alternatives — can produce a convincing voice clone from as few as three seconds of source audio. The rate of voice-based deepfake attacks increased by over 1,300% between 2023 and 2025, from roughly one incident per month to more than seven per day, according to the 2025 Voice Intelligence and Security Report from Pindrop.

Robocall Campaigns

Following the 2024 Biden robocall precedent, voice-cloned robocall campaigns targeting voters appeared in at least six countries during 2025 elections or referenda. The FCC’s February 2024 ruling classifying AI voice robocalls as illegal under the TCPA has limited, but not eliminated, their use in the US. International cases faced inconsistent legal frameworks: several EU member states had no specific legislation covering AI-generated voice content prior to the EU AI Act’s transparency provisions becoming operational in 2026.

Phone Scams at Scale

Voice cloning’s most damaging 2025 impact was not political but financial. The FBI’s Internet Crime Complaint Center (IC3) documented a sharp increase in “grandparent scam” variants using cloned voices of family members to request emergency wire transfers. A survey published in 2026 estimated that 1 in 4 Americans had encountered a deepfake voice interaction in the previous year — though not all were scams. The financial losses from voice clone fraud in 2025 were estimated at over $2.5 billion in the US alone.

Category 4: Fully Synthetic News Portals

The most structurally concerning development of 2025 was not a specific fake video or cloned voice, but the proliferation of entirely AI-generated news websites — portals with no human editorial staff, no real journalists, and no accountability structures, producing a continuous feed of plausible-looking content designed to be indexed by search engines and recommended by social platforms.

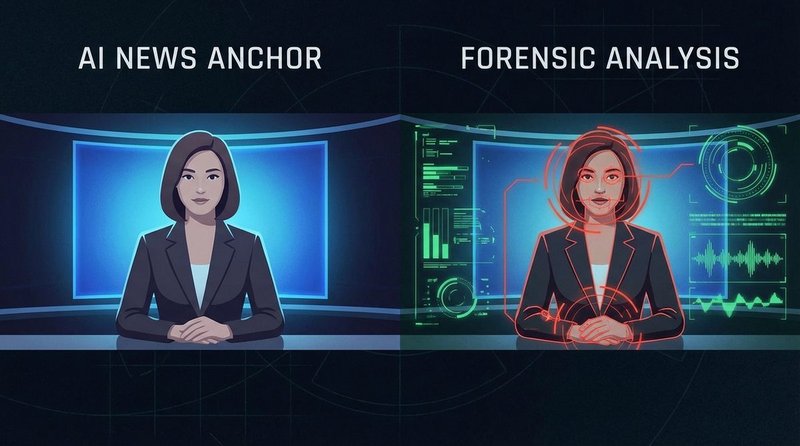

Meta’s 2024 Adversarial Threat Reports had already documented state-linked synthetic news portals using AI newsreader avatars. By 2025, this technique was no longer limited to state actors. NewsGuard’s monitoring identified hundreds of what they term UAIN (Unreliable AI-Generated News) sites — domains producing entirely machine-generated content across a range of political niches, monetized through programmatic advertising. These sites do not typically fabricate individual facts so much as fabricate context: real events described through a consistently misleading editorial frame, with no correction process, no bylines, and no stated methodology.

The “Pravda” network documented ahead of the 2024 EU elections was an early version of this model. Its 2025 successors were more numerous, more linguistically sophisticated, and more difficult to trace to origin actors.

Detection: Technical Methods

Technical deepfake detection operates across three layers: artifact analysis, metadata forensics, and provenance verification. No single method is sufficient. Used together, they dramatically increase detection accuracy.

Visual Artifact Analysis

Current AI image and video generation consistently produces characteristic artifacts: facial boundary inconsistencies at hairlines and ear margins; anatomically incorrect hands (extra fingers, fused digits, implausible joint angles); unnatural eye reflections that do not match scene lighting; and skin texture rendered at inconsistent resolution across the face. These artifacts are not always visible at normal viewing size but surface clearly under 200% zoom or forensic enhancement tools such as Forensically (29a.ch) or FotoForensics.

For video, temporal inconsistencies are equally revealing: facial geometry that does not track consistently through frames, unnatural blink rates (models trained mostly on footage of open eyes tend to under-blink), and lighting that changes between cuts in ways inconsistent with a single physical environment.
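
The under-blinking cue can be turned into a rough screening number. The sketch below is illustrative only: it assumes a per-frame eye-openness score is already available from a face-landmark tool, and the 0.2 threshold and both function names are hypothetical choices rather than values from any cited study.

```python
def count_blinks(openness, closed_below=0.2):
    """Count open-to-closed transitions in a per-frame eye-openness series."""
    blinks = 0
    eyes_closed = False
    for value in openness:
        if value < closed_below and not eyes_closed:
            blinks += 1          # a new blink starts on this frame
            eyes_closed = True
        elif value >= closed_below:
            eyes_closed = False  # eyes reopened; ready for the next blink
    return blinks


def blinks_per_minute(openness, fps, closed_below=0.2):
    """Convert a blink count into a per-minute rate for a clip at `fps` frames per second."""
    if not openness or fps <= 0:
        raise ValueError("need at least one frame and a positive fps")
    duration_minutes = len(openness) / fps / 60
    return count_blinks(openness, closed_below) / duration_minutes
```

A rate well below what the same speaker shows in footage known to be authentic is a reason to look closer, not a verdict on its own.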

Metadata and EXIF Analysis

Authentic photographs embed technical metadata (EXIF data) including camera model, lens specifications, capture timestamp, and — where location services were active — GPS coordinates. AI-generated images typically have stripped or absent EXIF data, or generic metadata inconsistent with the claimed device. Jeffrey’s Exif Viewer and ExifTool (command-line) both surface this information. A photo claimed to document a live event but showing no GPS data and a generic software-generated EXIF block warrants immediate verification.
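
As a rough illustration of that triage, the sketch below inspects one metadata record shaped like the JSON that ExifTool emits with its -json option. The function name, the choice of tags to check, and the sample record are assumptions made for this example; the output is a list of prompts for further verification, not a detector.

```python
import json

CAMERA_TAGS = ("Make", "Model", "DateTimeOriginal")
GPS_TAGS = ("GPSLatitude", "GPSLongitude")


def metadata_flags(tags):
    """Return human-readable reasons a metadata record deserves a closer look."""
    flags = []
    missing = [name for name in CAMERA_TAGS if not tags.get(name)]
    if missing:
        flags.append("missing camera tags: " + ", ".join(missing))
    if not all(name in tags for name in GPS_TAGS):
        flags.append("no GPS coordinates")
    if tags.get("Software") and not tags.get("Model"):
        flags.append("software tag present without a camera model")
    return flags


# Hypothetical record, shaped like the output of `exiftool -json photo.jpg`.
record = json.loads('[{"SourceFile": "photo.jpg", "Software": "ExampleEditor 1.0"}]')[0]
for reason in metadata_flags(record):
    print(reason)
```

Many social platforms strip metadata on upload, so these flags are most meaningful for files obtained directly from the claimed source.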

Spectral Audio Analysis

Voice clones produce artifacts in specific frequency ranges, particularly above 8 kHz, where synthesis tends to render a voice’s natural harmonics irregularly. Spectrogram tools that visualize audio frequency over time (Audacity, iZotope RX, Adobe Audition) make these anomalies visible. Pindrop’s enterprise-grade voice authentication system uses over 1,300 acoustic features to distinguish cloned from authentic voices, a level of sophistication not available to casual users but accessible to newsrooms and election monitoring organizations.
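
To make the frequency-band idea concrete, the sketch below measures what share of a short audio frame's spectral energy sits at or above 8 kHz, using a naive discrete Fourier transform from the standard library. It is not how Pindrop or any spectrogram tool works internally; the function names and the synthetic test tones are assumptions for illustration, and the ratio only quantifies the band an analyst would then inspect.

```python
import cmath
import math


def high_band_energy_ratio(samples, sample_rate, cutoff_hz=8000.0):
    """Fraction of spectral energy at or above `cutoff_hz`, via a naive DFT."""
    n = len(samples)
    if n == 0:
        raise ValueError("samples must not be empty")
    total = high = 0.0
    # For real-valued input, bins 1..n/2 cover every frequency up to Nyquist (DC is skipped).
    for k in range(1, n // 2 + 1):
        coeff = sum(s * cmath.exp(-2j * math.pi * k * t / n) for t, s in enumerate(samples))
        energy = abs(coeff) ** 2
        total += energy
        if k * sample_rate / n >= cutoff_hz:
            high += energy
    return high / total if total else 0.0


def tone(freq_hz, sample_rate=32000, n=320):
    """Synthetic sine tone standing in for a short audio frame."""
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n)]


print(round(high_band_energy_ratio(tone(1000), 32000), 3))   # tone below the cutoff
print(round(high_band_energy_ratio(tone(10000), 32000), 3))  # tone above the cutoff
```

The naive transform is quadratic in frame length, so it suits short frames only; a real workflow would use an FFT library and compare the result against known-authentic recordings of the same speaker.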

Reverse Image and Video Search

The simplest and most consistently effective first step remains reverse image search: Google Images, TinEye, and Yandex Images can locate earlier appearances of recycled photographs within seconds. For video, InVID/WeVerify’s browser plugin allows frame-by-frame extraction and reverse search, making it possible to identify recycled footage even when it has been re-edited or re-framed. This technique does not detect newly generated synthetic content — it detects misrepresented authentic content, which remains the majority of circulating misinformation.

Detection: Journalistic Methods

Technical tools identify artifacts. Journalistic methods establish provenance — the documented chain of custody from creation to publication. Provenance verification is the approach recommended by the SIFT method, covered in detail in our deepfakes workshop.

Source Triangulation

No single source should be treated as sufficient for verifying synthetic media claims. Cross-referencing requires at minimum: the original source (who first published or shared the content?), an independent second source with direct access to the event or person depicted, and a third-party verification organization (Reuters Fact Check, AFP Fact Check, BBC Verify, or AP’s fact-checking desk). If two of these three cannot be confirmed, the content should be treated as unverified regardless of its apparent quality.
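
The two-of-three rule can be written down as a small decision function. This is a sketch of the rule as stated above, with hypothetical field names; the "partially verified" label for the two-confirmed case is an assumption added for the example.

```python
from dataclasses import dataclass


@dataclass
class SourceChecks:
    original_source_identified: bool   # who first published or shared it
    independent_source_confirms: bool  # second source with direct access
    fact_checker_confirms: bool        # third-party verification organization


def verification_status(checks):
    """Two or more unconfirmed checks means the content is treated as unverified."""
    confirmed = sum([
        checks.original_source_identified,
        checks.independent_source_confirms,
        checks.fact_checker_confirms,
    ])
    if confirmed <= 1:
        return "unverified"
    return "verified" if confirmed == 3 else "partially verified"
```

Note that apparent quality is deliberately not an input: a polished clip with one confirmed source still comes back as unverified.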

Context Verification

A technically undetectable deepfake can still be exposed through contextual inconsistency. MIT Media Lab’s research team — which ran five pre-registered randomized experiments with 2,215 participants on human detection of political speech deepfakes — found that text transcripts were often sufficient to identify implausibility, even when audio and video appeared realistic. Checking whether the depicted statements are consistent with the subject’s documented positions, communication style, and physical location at the claimed time is a fundamental verification step that requires no technical tools.

Domain and Publication History

For synthetic news portal content: WHOIS registration date, domain history via the Wayback Machine, and byline verification (do the listed journalists have verifiable professional histories outside the site?) are the three most reliable quick checks. Legitimate news organizations have publication histories that predate the story being shared. AI-generated news portals typically show domain registration within the past 12 months and bylines with no external verification trail.
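
The three quick checks can be recorded in a consistent way once the facts have been looked up by hand. The sketch below makes no network calls: the registration date, earliest archived snapshot, and byline result are assumed to come from a manual WHOIS lookup, the Wayback Machine, and the reporter's own byline research, and the function name is hypothetical.

```python
from datetime import date


def portal_flags(registered_on, story_date, bylines_verified, earliest_archive=None):
    """Quick checks for a suspected synthetic news portal; returns a list of concerns."""
    flags = []
    if (story_date - registered_on).days < 365:
        flags.append("domain registered less than 12 months before the story")
    if earliest_archive is None or earliest_archive >= story_date:
        flags.append("no archived snapshots that predate the story")
    if not bylines_verified:
        flags.append("bylines have no external verification trail")
    return flags


print(portal_flags(date(2025, 3, 1), date(2025, 6, 1), bylines_verified=False))
```

An empty list does not prove a site is legitimate; it only means none of these three quick checks raised a concern.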

The Regulatory Response: EU AI Act and C2PA

Two parallel frameworks are converging to create the first legally enforceable infrastructure for synthetic media disclosure: the EU AI Act’s transparency obligations and the C2PA technical standard for Content Credentials.

EU AI Act: Article 50 Transparency Obligations

Article 50 of the EU AI Act requires providers of AI systems that generate synthetic audio, image, video, or text content to mark outputs in a machine-readable format detectable as artificially generated or manipulated. Deployers must disclose when AI is used to create realistic synthetic content. Deepfakes must be labeled even when the content is lawful; only “evidently artistic, creative, satirical, or fictional” content qualifies for reduced disclosure requirements.

The transparency obligations under Article 50 become enforceable on August 2, 2026. The European Commission published the first draft of the Code of Practice on marking and labeling AI-generated content on December 17, 2025, establishing technical standards for watermarking and provenance metadata ahead of the legal enforcement date. Penalties for non-compliance with these transparency obligations reach up to €15 million or 3% of global annual turnover, whichever is higher; the Act’s top tier of €35 million or 7% is reserved for prohibited AI practices. Both levels are intended to create compliance pressure on major platforms and AI providers.

The regulation’s territorial scope extends beyond EU borders: any company whose AI systems or synthetic content reach EU users must comply, regardless of where the company is based. This makes the EU AI Act, in effect, a global standard for the platforms that reach European audiences.

C2PA Content Credentials

The Coalition for Content Provenance and Authenticity (C2PA), which includes Adobe, Microsoft, Google, OpenAI, Meta, and major news organizations, has developed a technical standard that embeds cryptographically signed provenance metadata into image, audio, and video files. Content Credentials record who created the content, what tools were used, what edits were applied, and whether AI generation was involved — creating a tamper-evident chain of custody from creation to publication.

Adobe has integrated C2PA into Photoshop, Lightroom, and Firefly. YouTube added provenance-based labels in 2024. The US Department of Defense published guidance in January 2025 recommending C2PA adoption for government communications. OpenAI’s DALL-E 3 and Sora embed C2PA metadata by default.

A critical limitation: C2PA verifies authentic content — it does not detect deepfakes. The absence of C2PA metadata does not mean content is synthetic; content created on older devices or with tools that do not yet support the standard will also lack credentials. As the standard’s own documentation notes, missing credentials should prompt additional scrutiny, not automatic rejection. A complete C2PA implementation provides strong provenance evidence; its absence is an absence of evidence, not evidence of manipulation.
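
That asymmetry is easy to get wrong in a newsroom checklist, so it helps to spell it out. The sketch below is not the C2PA SDK or its API: the three-state enum and the suggested actions are hypothetical, and the "invalid" case is an assumption drawn from the standard's tamper-evident design.

```python
from enum import Enum


class CredentialState(Enum):
    VALID = "valid"      # manifest present and validation succeeded
    INVALID = "invalid"  # manifest present but validation failed
    ABSENT = "absent"    # no manifest found


def interpret_credentials(state):
    """Map a Content Credentials check to a next step, not a verdict on authenticity."""
    if state is CredentialState.VALID:
        return "strong provenance evidence; review the recorded creation and edit history"
    if state is CredentialState.INVALID:
        return "possible tampering; escalate for forensic review"
    return "no provenance evidence; apply additional scrutiny, do not reject automatically"


print(interpret_credentials(CredentialState.ABSENT))
```

Keeping "absent" separate from "invalid" is the design choice that matters here: only a failed validation says anything about manipulation.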

Privacy implications are also under active discussion. C2PA metadata can include timestamps, geolocation, and editing histories. A Fortune investigation published in September 2025 documented cases where C2PA metadata captured location data that content creators had not intended to share. The standard includes provisions for stripping sensitive metadata before publication, but implementation consistency across platforms remains uneven.

What to Expect in 2026

Three developments will shape the AI-generated misinformation landscape through 2026 and beyond.

Enforcement begins. The EU AI Act’s Article 50 provisions become legally enforceable in August 2026. This will create the first large-scale test of whether regulatory disclosure requirements can slow the production and distribution of synthetic media misinformation. Early compliance signals from major platforms are mixed: Adobe, Google, and OpenAI have made credible commitments; smaller AI providers and distribution platforms are less prepared.

Detection arms race continues. Generation and detection capabilities are evolving in parallel. MIT Media Lab’s research — and the broader literature — consistently shows that neither human observers nor automated detection tools are reliably accurate across all deepfake types. The combination of human judgment and machine-assisted analysis outperforms either alone. Newsrooms, election monitors, and platform trust-and-safety teams are investing in hybrid detection workflows; individual media consumers are largely left with the same tools they had in 2024.

Verification infrastructure is expanding, slowly. The SIFT method, C2PA adoption, and cross-platform fact-checking networks represent genuine progress. The verification capacity of the broader media literacy ecosystem — teachers, librarians, civic organizations — is growing through workshops and tools such as those covered in our deepfakes identification workshop. The gap between production capacity and verification capacity remains wide, but it is no longer widening as fast as it was in 2023.

For the foundational cases that established the current threat landscape — including the Zelensky surrender deepfake and the 2024 AI protest photo wave — the fact-check index and case database provide full documentation with methodology notes.