Media literacy is the capacity to find, evaluate, and use information effectively — and to recognize when information is designed to manipulate rather than inform. It is not a fixed skill but a practice, and the conditions it addresses are changing faster than at any previous point in media history.

What Media Literacy Actually Means

The term gets applied loosely, but a working definition matters. Media literacy encompasses three distinct competencies: the ability to recognize false or misleading content, the ability to verify claims through independent sources and tools, and the ability to make informed decisions about sharing information. Lacking any one of these creates a gap that bad actors consistently exploit.

The UNESCO definition, which informs most European media education frameworks, describes media literacy as “the ability to access, analyze, evaluate, and create media in a variety of forms.” The Council of Europe’s 2021 Media Literacy Framework adds a further dimension: the ability to engage with media content as a participant in democratic processes, not just as a passive consumer. This site uses both definitions as reference points.

A critical clarification: media literacy is not the same as media skepticism. The goal is not to distrust all sources — it is to calibrate trust based on evidence. Blanket distrust of mainstream media is itself a documented product of disinformation campaigns, not evidence of critical thinking. Research from the Reuters Institute and the Harvard Kennedy School’s Shorenstein Center shows that populations with high levels of media skepticism are not more resistant to misinformation — they are differently susceptible to it, particularly from non-institutional sources.

Why 2025 Changed the Stakes

Three converging developments have made the practice more urgent in the past two years than at any point in the previous decade.

AI-Generated Synthetic Media at Scale

Until 2023, high-quality deepfake production required specialist hardware and significant technical skill. By late 2024, consumer tools built on Stable Diffusion, ElevenLabs voice synthesis, and multiple video generation platforms had reduced that barrier to near-zero. A convincing synthetic video of a public figure can now be produced in minutes on off-the-shelf hardware. The forensic gap — the time between a synthetic media item circulating and its definitive debunking — is measured in hours or days. That window is enough to shift public opinion on time-sensitive issues before correction reaches the same audience that saw the original.

Coordinated Inauthentic Behavior at Reduced Cost

Meta’s 2024 Adversarial Threat Report documented, by Meta’s own account, a significant increase in AI-assisted coordinated inauthentic behavior (CIB) campaigns compared to 2023. The defining characteristic of current campaigns is not scale but specificity: LLM-generated content can be personalized to specific communities, languages, and emotional profiles at a cost that was prohibitive for human-written propaganda just three years ago. Campaigns that once required teams of writers now require a prompt and an API key.

Erosion of Institutional Trust

The Reuters Institute Digital News Report 2024 recorded average trust in news media at 40% across 47 surveyed markets, down from the pandemic-era peak. Declining institutional trust does not, by itself, make populations more susceptible to misinformation. But it removes one of the primary anchors people use to calibrate information: source reputation. When no source is trusted, all claims start to look equally plausible. A carefully sourced report and a fabrication then compete on equal footing, and that benefits those who produce disinformation at high volume.

The Three Core Competencies

1. Recognizing False and Misleading Content

Recognition is the entry point. It requires familiarity with the structural patterns that misleading content uses: emotional language in headlines, vague attribution, decontextualized statistics, absence of original sources, and urgency framing. These patterns are not proof of falsehood — they are signals that more investigation is warranted before trusting or sharing.

The Emotional Language in Headlines guide covers the specific linguistic patterns in detail, with examples from documented cases across the political spectrum. Recognition is a prerequisite for verification — you cannot verify content you have not first flagged as requiring scrutiny.

2. Verifying Claims

Verification is the core practice. It means tracing claims back to primary sources — original studies, official records, firsthand accounts — and evaluating the evidence on its own terms, not through intermediary summaries or social media framings. Most viral misinformation is traceable, given ten minutes and the right tools.

The Workshop section covers four practical verification methods in full: the SIFT framework, reverse image search, emotional language analysis, and deepfake detection. The Fact-Checking Tools guide lists the most reliable freely accessible tools for each verification task, with notes on what each tool does and does not cover.

3. Responsible Sharing

Misinformation spreads primarily through people who believe it to be true. The share decision is therefore as consequential as the creation decision. Responsible sharing means applying at least a basic verification check before amplifying a claim — particularly for claims that confirm existing beliefs, which research consistently shows are shared faster and with less scrutiny than claims that challenge them.

The practical standard is simple: if you cannot identify the original source of a claim and confirm it independently, do not share it. If the claim produces a strong emotional reaction, treat that as a reason to slow down, not as confirmation that it must be true.

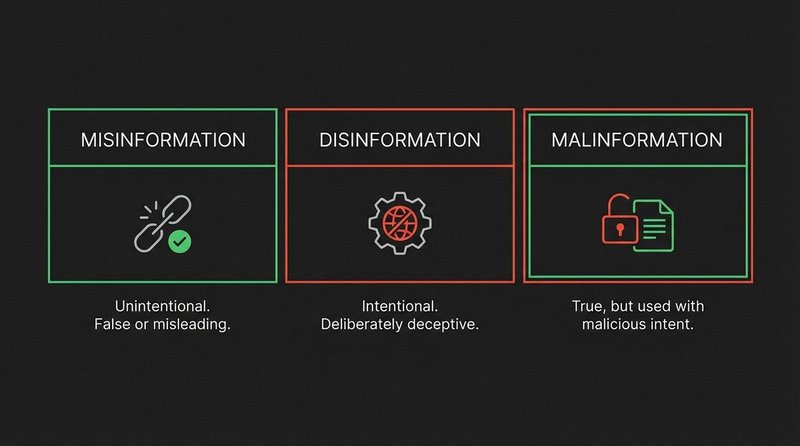

Key Concepts: Misinformation, Disinformation, Malinformation

These three terms are used interchangeably in public discourse, but they describe different phenomena with different causes and different remedies. The distinction matters for accurate diagnosis.

- Misinformation: false or inaccurate information shared without intent to deceive. The person spreading it believes it to be true. Example: a user shares a health myth they encountered and genuinely believe. The appropriate response is accurate information presented accessibly and without condescension, because people who spread misinformation are not the same as people who create it.

- Disinformation: false information created and spread with deliberate intent to deceive. The producer knows the content is false and has a strategic goal, usually political, financial, or social. Examples include state-sponsored influence operations and fabricated quotes attributed to political figures. The appropriate response is exposure of the deception and its source, combined with prebunking: inoculating audiences against the specific technique before they encounter it at scale.

- Malinformation: accurate information used with intent to harm, typically by stripping context, mistiming release, or deploying private information to target individuals. Example: a factually accurate but deliberately timed leak of private communications designed to damage a candidate in the final days before an election. The information itself is true; the weaponization is the problem. The appropriate response is context restoration and source analysis, not fact-checking the content itself.

For a detailed analysis of how these three categories interact and overlap in documented cases, see Misinformation vs. Disinformation: What the Difference Actually Means.

Prebunking: Getting Ahead of Misinformation

Debunking, which means correcting false information after it has spread, is necessary but limited. Corrections rarely reach the same audience as the original claim. Repeated exposure to a false claim, even in the context of debunking, can also make it feel more familiar and therefore more believable. This is the illusory truth effect, documented in research by Fazio et al., 2015, and replicated across multiple subsequent studies.

Prebunking addresses this limitation by inoculating people against misinformation techniques before they encounter specific false claims. Instead of correcting content, prebunking teaches the structural patterns that false content uses, so the technique is recognizable regardless of which specific claim it is applied to. Research from the University of Cambridge’s Social Decision-Making Lab and Google’s Jigsaw team (jigsaw.google.com) has demonstrated that short prebunking interventions reduce susceptibility to misinformation techniques in controlled trials.

The Workshop section of this site is structured as a prebunking resource: it teaches detection techniques, not just specific debunked claims.

The Role of Platform Algorithms in Misinformation Spread

Individual critical thinking is necessary but not sufficient. Platform design shapes what information reaches people before any individual evaluation decision is made. Understanding how algorithmic amplification works is part of media literacy in 2025.

Content recommendation algorithms on major social platforms optimize primarily for engagement, not for accuracy, relevance, or public benefit. Research from MIT’s Media Lab published in Science (Vosoughi, Roy, and Aral, 2018) analyzed news spread on Twitter. It found that false news spread significantly faster, farther, and more broadly than true news. True stories took about six times as long as false ones to reach 1,500 people, and false stories were 70% more likely to be retweeted. A key driver is novelty: false information tends to be more surprising than accurate information, and surprise is a reliable engagement signal. The algorithm amplifies what generates reactions, which gives manipulative content a structural advantage.
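The engagement-driven ranking described above can be illustrated with a deliberately simplified toy model. This is a sketch under stated assumptions, not a description of how any real platform works. The post titles, interaction counts, and the weights in the hypothetical `engagement_score` function are all invented for illustration. Real recommendation systems are proprietary and far more complex. The point the sketch makes is structural: accuracy is known to us but never feeds into the score, so it cannot affect the ordering.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    shares: int        # hypothetical interaction counts, invented for illustration
    comments: int
    reactions: int
    is_accurate: bool  # known to us, but never used by the ranking function


def engagement_score(post: Post) -> float:
    # Hypothetical weights: active interactions (shares, comments) count
    # more than passive reactions. post.is_accurate is not an input.
    return 3.0 * post.shares + 2.0 * post.comments + 1.0 * post.reactions


def rank_feed(posts: list[Post]) -> list[Post]:
    # Order the feed purely by engagement, highest score first.
    return sorted(posts, key=engagement_score, reverse=True)


feed = [
    Post("Careful report on new study", shares=40, comments=15, reactions=200, is_accurate=True),
    Post("Shocking claim with no source", shares=120, comments=90, reactions=300, is_accurate=False),
    Post("Official correction of the claim", shares=10, comments=5, reactions=80, is_accurate=True),
]

for post in rank_feed(feed):
    print(f"{engagement_score(post):7.1f}  accurate={post.is_accurate}  {post.title}")
```

Changing the weights shifts the numbers but not the underlying point. As long as accuracy is not an input, nothing in the ranking favors the careful report or the correction over the unsourced claim.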

This does not mean individuals are powerless. It means the verification habits described in this guide — pausing before sharing, tracing claims to primary sources, investigating source credibility — carry additional weight precisely because the default platform environment does not support them. Every share decision is also an algorithmic vote for the type of content that gets promoted next.

Resources in This Section

The media literacy hub contains guides, checklists, and analysis covering the full range of competencies described above. Core resources currently available:

- Misinformation vs. Disinformation — What the Difference Actually Means

- Fact-Checking Tools — The Best Free Resources for Independent Verification

- How to Teach Media Literacy to Teenagers — A Practical Guide for Educators

- The Anatomy of Viral Fake News — How False Stories Spread and Why

The Workshop section covers hands-on verification methods with step-by-step guides. The Fake News Database provides documented real-world case studies for each concept covered in this hub.