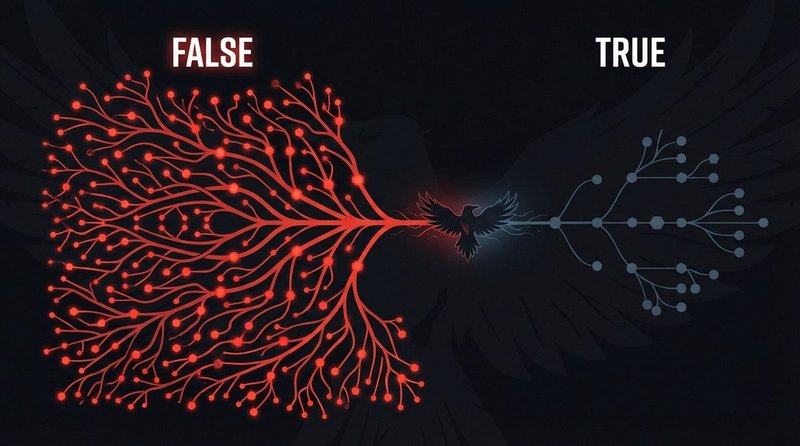

False news spreads faster, further, and deeper than true news on social media — not because people are irrational, but because false stories are engineered to exploit the same cognitive shortcuts that make us efficient information processors. Understanding the mechanism is the first step to resisting it.

The Data: What the MIT Study Found

The foundational empirical reference for this topic is a 2018 study by Soroush Vosoughi, Deb Roy, and Sinan Aral, published in Science (Vol. 359, Issue 6380). The researchers analyzed the spread of approximately 126,000 fact-checked stories shared on Twitter between 2006 and 2017, by roughly 3 million people, more than 4.5 million times.

The findings were unambiguous. False news stories reached 1,500 people approximately six times faster than true stories. They spread to unique users more broadly (more people saw false stories than true ones) and more deeply (false stories penetrated further into social networks through longer cascade chains). The effect was strongest for political misinformation. And crucially: the researchers controlled for bots. Humans, not automated accounts, were the primary vector for false news spread. Bots spread true and false stories at roughly the same rate; the human amplification differential was the significant finding.
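The terms "broadly" and "deeply" have concrete meanings when a story's spread is drawn as a tree of retweets. The sketch below is illustrative only: the `cascade_metrics` function and the two toy edge lists are hypothetical and are not the study's code or data. It assumes each cascade is a tree rooted at the original post, and it computes size (unique users reached), depth (the longest retweet chain), and maximum breadth (the most users at any single depth).

```python
from collections import defaultdict, deque


def cascade_metrics(root, edges):
    """Return size, depth, and max breadth of a retweet cascade.

    edges: list of (parent, child) pairs, meaning `child` retweeted `parent`.
    Assumption: the cascade is a tree rooted at `root` (toy input, not real data).
    """
    children = defaultdict(list)
    for parent, child in edges:
        children[parent].append(child)

    # Breadth-first walk, counting how many users sit at each depth.
    users_at_depth = defaultdict(int)
    queue = deque([(root, 0)])
    while queue:
        user, depth = queue.popleft()
        users_at_depth[depth] += 1
        for child in children[user]:
            queue.append((child, depth + 1))

    return {
        "size": sum(users_at_depth.values()),
        "depth": max(users_at_depth),
        "max_breadth": max(users_at_depth.values()),
    }


# Two made-up cascades: one wide and shallow, one narrow and deep.
shallow = [("a", "b"), ("a", "c"), ("a", "d")]
deep = [("a", "b"), ("b", "c"), ("c", "d"), ("c", "e")]

print(cascade_metrics("a", shallow))
print(cascade_metrics("a", deep))
```

In this toy data the shallow cascade is broader at its widest point, while the deep cascade reaches further from the original post. The study reported false stories scoring higher on size, breadth, and depth alike.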

The study’s explanation for the asymmetry: false news is more novel (it contains information audiences have not seen before) and more emotionally engaging. Replies to stories rated false by fact-checkers expressed significantly more surprise, fear, and disgust. Replies to true stories expressed more sadness and anticipation, which are lower-arousal emotional states that produce less sharing behavior.

Emotional Triggers: The Architecture of Viral False Content

Viral misinformation reliably exploits three categories of emotional response. Knowing them does not make you immune — the responses are physiological — but it creates a brief window of deliberate evaluation before you act on the impulse to share.

Outrage

Moral outrage is among the most reliable predictors of social media sharing behavior. A 2017 study by Brady et al. in PNAS found that each moral-emotional word added to a tweet increased its retweet rate by approximately 20%. Viral fake news stories systematically use outrage framing: they present events as injustices, betrayals, or threats to in-group values. The content does not need to be entirely false to trigger outrage; selective framing of real events, stripped of context, works just as well.
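A per-word effect of roughly 20% adds up quickly. The sketch below is back-of-the-envelope arithmetic, not the Brady et al. model: it assumes the effect compounds multiplicatively with each additional moral-emotional word, and the `retweet_multiplier` function and its `per_word_increase` parameter are hypothetical names introduced for illustration.

```python
def retweet_multiplier(moral_emotional_words, per_word_increase=0.20):
    """Expected retweet rate relative to a tweet with no moral-emotional words.

    Assumption: each word multiplies the expected rate by (1 + per_word_increase).
    """
    return (1 + per_word_increase) ** moral_emotional_words


# Relative retweet rate for tweets containing 0 to 4 moral-emotional words.
for n in range(5):
    print(n, round(retweet_multiplier(n), 2))
```

Under this assumption, a headline rewritten to include four such words would be expected to travel about twice as far as a neutral version of the same claim.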

Fear

Threat-based misinformation activates the amygdala before the prefrontal cortex can apply analytical reasoning — a well-documented phenomenon in cognitive neuroscience. Health misinformation (“This food/vaccine/substance is killing you”), crime misinformation (“Crime is surging in your neighborhood”), and identity threat narratives (“They are replacing your culture”) all exploit fear responses. The content spreads rapidly because sharing it feels protective — you are warning your network — which creates a prosocial motivation for amplifying false information.

Schadenfreude and Tribal Satisfaction

Stories that validate negative beliefs about an out-group — political opponents, rival nations, ideological enemies — trigger reward circuitry rather than threat responses. Sharing them feels good. Research by Pennycook & Rand (2019) in Social Psychological and Personality Science found that “partisan congruence” (content that confirmed existing political beliefs) was the strongest predictor of sharing intent — stronger than the perceived accuracy of the content. People share content because it feels true to their worldview, not necessarily because they believe it is factually accurate.

Platform Algorithms and Engagement Optimization

Platform architecture does not create misinformation, but it is a significant amplification mechanism. The core design principle of engagement-optimized recommendation systems — maximize time on platform — structurally rewards emotionally activating content, regardless of its accuracy.

Internal Facebook research, reported by the Wall Street Journal in 2021 on the basis of leaked documents, found that the platform’s 2018 algorithm change (a shift toward “meaningful social interactions,” meaning content that generated comments and reactions rather than passive views) had the effect of amplifying outrage-inducing content, including misinformation. The researchers proposed fixes; according to the reporting, the fixes were not implemented at scale due to concerns about reducing overall engagement.

The mechanism is not unique to Facebook. YouTube’s recommendation algorithm, as documented in a 2020 audit by Ribeiro et al., consistently recommended progressively more extreme content once a user began watching content in certain political or conspiratorial categories, a “rabbit hole” effect driven by watch-time optimization. Twitter/X’s algorithmic timeline has been shown by the company’s own researchers to amplify right-leaning political content more than left-leaning content in several countries, independent of engagement differences. The platform published this finding voluntarily in 2021.

The structural implication: platforms built to maximize engagement will systematically amplify content that triggers strong emotional responses — which is, by design, what false and misleading news is engineered to do.
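The structural point can be seen in a toy ranking function. This is a minimal sketch under stated assumptions, not any platform's actual system: the `Post` class, the `rank_by_engagement` function, and every score below are made up for illustration. The ranker sorts only on a predicted engagement score, so whether a post is accurate never enters the ordering.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    predicted_engagement: float  # hypothetical model score (e.g. expected comments and reactions)
    is_accurate: bool            # known to fact-checkers, but never read by the ranker


def rank_by_engagement(posts):
    """Order a feed by predicted engagement alone, highest first."""
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)


# Made-up feed: the inaccurate or misleading items carry the highest engagement scores.
feed = [
    Post("Measured report on city budget", 0.8, True),
    Post("Outrage-framed false claim", 3.1, False),
    Post("Context-stripped real event", 2.4, False),
    Post("Official health guidance", 1.1, True),
]

ranked = rank_by_engagement(feed)
for position, post in enumerate(ranked, start=1):
    print(position, post.title, "accurate" if post.is_accurate else "not accurate")
```

Nothing in the sort key penalizes falsehood. If emotionally activating content scores higher on engagement, it rises to the top regardless of its accuracy.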

Timing: When Fake News Is Most Dangerous

False information does not spread uniformly across time. Three specific contexts create conditions where misinformation spreads fastest and corrections are least effective.

Breaking News Windows

The first 30–60 minutes after a major event is when verified information is scarcest and audience demand is highest. Misinformation fills the gap. Research by the Reuters Institute for the Study of Journalism consistently finds that false claims introduced during breaking news windows are harder to correct than those introduced after authoritative reporting is available, because the false version often becomes the mental anchor audiences use to evaluate subsequent corrections.

Elections and Political Campaigns

Electoral periods show consistent spikes in misinformation volume and velocity across every studied democratic context. The EU vs Disinfo database documents election-period amplification across EU member states in every election cycle since 2014. The combination of high political stakes, strong tribal motivation to share favorable content, and fragmented media environments creates optimal conditions for false information to reach large audiences before fact-checkers can respond.

Public Health Crises

The WHO popularized the term “infodemic” in February 2020 to describe the parallel epidemic of false and misleading COVID-19 health information. Its joint study with partner organizations found that by March 2020, health misinformation was traveling faster than official guidance across every major social platform, a direct consequence of the same mechanisms described above operating in a high-fear, high-uncertainty environment.

Super-Spreader Accounts and Network Effects

Viral spread is not uniform across social networks. A small number of highly connected, high-engagement accounts — often described as “super-spreaders” — account for a disproportionate share of misinformation amplification.

Analysis by the NewsGuard Misinformation Monitor and independent researchers studying the 2020 U.S. election consistently found that a small number of accounts — often with millions of followers, often including prominent political figures — were responsible for the majority of high-reach misinformation episodes. The pattern is structurally similar to what epidemiologists call “superspreader events” in disease transmission: most people infect zero or one person; a small number of people, in the right conditions, infect hundreds.
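Concentration of this kind is usually expressed as the share of total reach that comes from the top few accounts. The sketch below shows that calculation on invented numbers: the `top_share` function, the account names, and the reach figures are all hypothetical and are not drawn from NewsGuard or any election dataset.

```python
def top_share(reach_by_account, top_n):
    """Fraction of total reach contributed by the `top_n` highest-reach accounts."""
    reaches = sorted(reach_by_account.values(), reverse=True)
    total = sum(reaches)
    if total == 0:
        return 0.0
    return sum(reaches[:top_n]) / total


# Made-up reach (people exposed) for ten accounts sharing the same false claim.
reach = {
    "acct_01": 900_000,
    "acct_02": 600_000,
    "acct_03": 100_000,
    "acct_04": 90_000,
    "acct_05": 80_000,
    "acct_06": 70_000,
    "acct_07": 60_000,
    "acct_08": 50_000,
    "acct_09": 30_000,
    "acct_10": 20_000,
}

print(f"Top 2 of {len(reach)} accounts: {top_share(reach, 2):.0%} of total reach")
```

In this toy data two of ten accounts deliver three quarters of all exposure, which is the shape of distribution the super-spreader description refers to.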

Platform-level network analysis by Graphika and the DFRLab has documented coordinated amplification networks in which accounts with no organic relationship artificially boost each other’s content — compressing the time between initial publication and mass exposure. This makes the breaking-news window even shorter and reduces the time available for verification-based interventions.

Case Study: The Anatomy of a Real Viral Fake News Event

In November 2016, a false claim circulated that a Washington, D.C., pizzeria called Comet Ping Pong was the center of a child trafficking network run by senior Democratic Party officials. The claim, which became known as “Pizzagate,” had no evidentiary basis and was investigated and dismissed by Washington, D.C.’s Metropolitan Police Department. Nevertheless, it spread to millions of people within days and was directly cited by a man who fired a rifle inside the restaurant on December 4, 2016, injuring no one but causing significant property damage.

The Pizzagate case demonstrates every mechanism described in this article operating simultaneously:

- Emotional trigger: Child endangerment is among the highest-arousal moral concerns — triggering both outrage and fear responses.

- Timing: It emerged in the days around the 2016 U.S. election, in a peak-engagement political moment with high partisan motivation to share damaging information about the opposing party.

- Super-spreader amplification: The claim was promoted by accounts with large audiences on Twitter and YouTube, including some with hundreds of thousands of followers, before platform enforcement mechanisms were applied.

- Algorithm optimization: YouTube’s recommendation algorithm, according to later analysis, directed users from mainstream election content into Pizzagate videos through predictable content escalation paths.

- Correction asymmetry: By the time the Washington Post, New York Times, and multiple fact-checkers had published full debunks, the false version had already reached more people than any subsequent correction would reach. The Vosoughi et al. finding about the six-times faster spread of false news is directly illustrated here.

A documented analysis of the Pizzagate information operation, including spread mapping, is maintained by the European Digital Media Observatory (EDMO) as a reference case study in coordinated influence operations.

Understanding these mechanisms at the structural level is the core prerequisite for evaluating content before you share it. The Misinformation vs. Disinformation guide explains how intent maps onto these viral mechanics. The Emotional Language in Headlines workshop provides hands-on practice identifying trigger framing in real headlines. Documented viral cases with full spread analysis are available in the Fake News Database. Return to the Media Literacy Hub for the full learning path.