Misinformation and disinformation are not synonyms. The difference — intent — determines which countermeasures work and which ones fail. Treating all false information as deliberate deception leads to the wrong interventions; ignoring malicious intent lets coordinated campaigns operate unchallenged.

Three Categories, One Framework

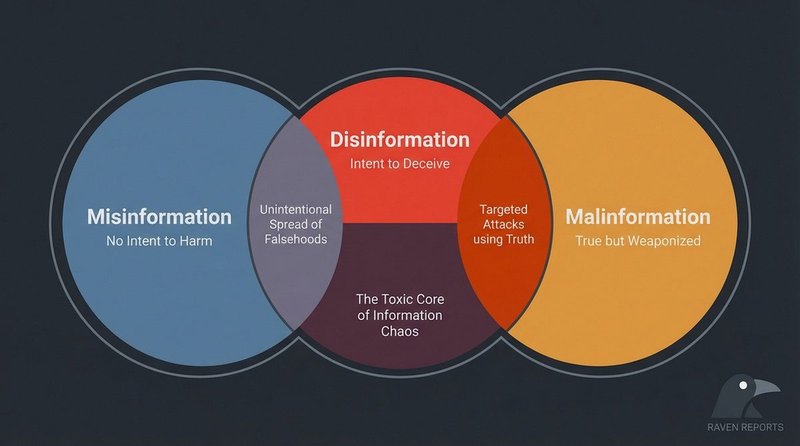

The most cited framework for understanding false information comes from researcher Claire Wardle and her colleagues at First Draft. Their 2017 report Information Disorder, co-authored with Hossein Derakhshan and published by the Council of Europe, distinguishes three phenomena along two axes: whether the content is false, and whether the creator intends harm.

- Misinformation: False or inaccurate information shared without intent to harm. The person spreading it believes it to be true. Examples: a parent sharing a debunked home remedy in a WhatsApp family group, or a journalist citing an unverified statistic in good faith. The content is wrong; the motive is not malicious.

- Disinformation: False or misleading information created and spread with deliberate intent to deceive or harm. The source knows the information is false, or is recklessly indifferent to its accuracy, and distributes it anyway to achieve a goal: political manipulation, financial gain, reputational damage, or the destabilization of public trust. Fabricated quotes attributed to politicians, coordinated network campaigns to discredit journalists, and state-sponsored election interference all fall here.

- Malinformation: Information that is true but weaponized to cause harm. This category is the most counterintuitive: no false claim is made, but accurate private information (a hacked email, a leaked photograph, real health records) is selectively released to damage a specific target. The EU’s Code of Practice on Disinformation and the Council of Europe’s guidelines both treat malinformation as a distinct category requiring different regulatory responses than the other two.

UNESCO’s media and information literacy curriculum adopts a compatible taxonomy, emphasizing that the umbrella term “fake news” is analytically insufficient and potentially misleading — it conflates categories that require fundamentally different responses.
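The two axes described above can be read as a simple decision rule. The sketch below is only an illustration of that reading, not part of the Wardle/First Draft framework itself: the names `Category`, `classify`, `is_false`, and `intends_harm` are hypothetical, and treating both axes as yes/no values is a deliberate simplification.

```python
from enum import Enum


class Category(Enum):
    MISINFORMATION = "misinformation"
    DISINFORMATION = "disinformation"
    MALINFORMATION = "malinformation"
    # Assumption: true content shared without harmful intent falls
    # outside the three categories, so it gets its own label here.
    NOT_DISORDER = "not information disorder"


def classify(is_false: bool, intends_harm: bool) -> Category:
    """Map the two axes (falsity, intent to harm) onto a category."""
    if is_false and intends_harm:
        return Category.DISINFORMATION   # false and deliberate
    if is_false:
        return Category.MISINFORMATION   # false but shared in good faith
    if intends_harm:
        return Category.MALINFORMATION   # true but weaponized
    return Category.NOT_DISORDER


# A debunked home remedy shared by a well-meaning parent:
print(classify(is_false=True, intends_harm=False).value)
```

In practice neither input is directly observable. Establishing falsity requires verification and establishing intent requires evidence about the source, which is why real cases are harder to sort than this rule suggests.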

Why Intent Changes Everything

Intent is not just an ethical question — it is a practical one that determines which interventions are effective.

When false information spreads because people believe it is true (misinformation), the most effective responses are educational: debunking, prebunking, and improving media literacy. People who shared wrong information in good faith will generally correct themselves when given accurate information and a low-friction way to do so. Research by Pennycook et al. (2020) in Psychological Science found that simply asking people to consider accuracy before sharing reduced the spread of misinformation in simulated studies.

When false information is disinformation — deliberately manufactured — educational responses alone are insufficient. The source will not update its behavior based on fact-checks. Effective countermeasures include platform-level enforcement, legal frameworks (such as the EU’s Digital Services Act), attribution research to expose coordinated inauthentic behavior, and strategic communication that preemptively inoculates audiences. The EU vs Disinfo project maintains an ongoing database of documented Kremlin disinformation cases — over 16,000 entries as of early 2026 — precisely because naming the source is a necessary part of the response.

Malinformation calls for yet another toolkit: primarily legal mechanisms around privacy, data protection, and platform transparency — not fact-checking, since the underlying facts are accurate.

Real Examples Across All Three Categories

Misinformation in Practice

During the early months of the COVID-19 pandemic, claims that drinking hot liquids could kill the SARS-CoV-2 virus circulated widely on messaging apps. Most people sharing these messages genuinely believed they were being helpful. The WHO’s mythbusters page documented dozens of similar cases where well-intentioned sharing accelerated the spread of health misinformation. No coordinated campaign was necessary; anxious people in a high-uncertainty environment amplified plausible-sounding but false information organically.

Disinformation in Practice

The U.S. Intelligence Community’s 2017 assessment of Russian interference in the 2016 U.S. election documented a coordinated disinformation campaign involving fabricated content, fake personas, and algorithmic amplification. Operatives at the Internet Research Agency created thousands of social media accounts to pose as authentic American voices on divisive political issues — a textbook disinformation operation where the creators knew the content was false and the intent was explicit political manipulation.

Malinformation in Practice

The 2016 release of John Podesta’s private emails — obtained through a targeted phishing attack on his Gmail account and published via WikiLeaks — is a documented case of malinformation. The emails were real. No content was fabricated. But their selective release, timed to damage a political campaign, constitutes weaponized true information by the definition established in the Wardle/First Draft framework. The Council on Foreign Relations’ cyber operations tracker classifies this operation under information operations.

The Gray Areas

The three-category model is a useful analytical tool, not an airtight taxonomy. Real cases frequently occupy overlapping territory.

Consider a viral social media post that contains a genuine statistic presented without context, in a way the poster knows will lead audiences to a false conclusion. The data point is true; the framing is deliberately deceptive. This sits between malinformation (no false claim) and disinformation (deliberate deception). Wardle’s framework accounts for this with a spectrum of “seven types of mis- and disinformation” ranging from satire and parody through to fabricated content, acknowledging that intent and falsity are continuous variables, not binary switches.

A second common gray area is partisan amplification: when a disinformation narrative, originally created by a coordinated actor, gets picked up and shared by ordinary citizens who now genuinely believe it. The initial creation was disinformation; the subsequent spread is misinformation. Attribution research by organizations like Graphika and the Digital Forensic Research Lab (DFRLab) focuses on identifying the original coordinated source, precisely because the downstream organic sharing is not the primary problem.

A third complexity is contested facts: claims in areas where genuine scientific uncertainty exists, or where expert opinion is legitimately divided. Classifying a contested empirical claim as disinformation requires establishing both that it is false and that the source knew this. This distinction matters enormously for free expression: overreach in labeling contested speech as disinformation is itself a documented abuse vector, used by authoritarian governments to suppress legitimate dissent.

Implications for Countermeasures

Understanding the category of false information you are dealing with is a prerequisite for choosing an effective response. The following list maps intervention types to information disorder categories:

- Media literacy education: primarily addresses misinformation, where the audience’s ability to evaluate sources is the key variable.

- Prebunking / inoculation theory: effective across misinformation and early-stage disinformation; research by Lewandowsky & van der Linden (2021) shows that exposing audiences to weakened forms of manipulation techniques builds resistance.

- Platform enforcement (content removal, demotion, labeling): targets disinformation at the distribution layer; effectiveness depends on the speed of enforcement relative to viral spread.

- Attribution and exposure: targets disinformation at the source; naming coordinated actors raises the cost of running influence operations.

- Legal and regulatory tools: relevant for both disinformation (EU DSA, national electoral integrity laws) and malinformation (data protection law, privacy torts).

- Corrections and debunking: most effective for misinformation; less effective for disinformation, where the source will not update and where repeating a false claim in order to correct it risks amplifying it. Corrections also have to contend with the “liar’s dividend”: the phenomenon where accusations of disinformation are themselves deployed to discredit accurate reporting.
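The mapping above can also be written as a small lookup, which makes it easy to ask the question in the other direction: given a category, which interventions apply? This is a minimal sketch under stated assumptions. The names `INTERVENTIONS` and `interventions_for` are hypothetical, and each intervention is linked only to the categories the list names as its primary targets, which flattens qualifiers such as "early-stage" and "less effective".

```python
from typing import Dict, List, Set

# Intervention -> categories it primarily addresses, per the list above.
# Simplification: partial or reduced effectiveness is not modeled.
INTERVENTIONS: Dict[str, Set[str]] = {
    "media literacy education": {"misinformation"},
    "prebunking / inoculation": {"misinformation", "disinformation"},
    "platform enforcement": {"disinformation"},
    "attribution and exposure": {"disinformation"},
    "legal and regulatory tools": {"disinformation", "malinformation"},
    "corrections and debunking": {"misinformation"},
}


def interventions_for(category: str) -> List[str]:
    """Return the interventions linked to a category, sorted by name."""
    return sorted(
        name for name, categories in INTERVENTIONS.items()
        if category in categories
    )


for category in ("misinformation", "disinformation", "malinformation"):
    print(category, "->", interventions_for(category))
```

Read this way, malinformation stands out: only the legal and regulatory tools apply to it, consistent with the point that fact-checking does not help when the underlying facts are accurate.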

For a structured method to evaluate any specific claim before sharing, see our guide to the SIFT Method. For real-world cases sorted by category and pattern, the Fake News Database provides documented examples with full methodology notes. The full Media Literacy Hub connects all concepts covered here into a structured learning path.