Spotting fake news is a learnable skill, not a personality trait. This workshop section breaks down four practical methods — each one applicable without specialist tools, institutional access, or prior training.

What This Workshop Covers

The methods documented here are not theoretical frameworks. They are verification workflows used by professional fact-checkers, journalists, and digital investigators — adapted for use by anyone with internet access and a critical mindset.

Each method is documented in a dedicated guide with step-by-step instructions, real examples from the Fake News Database, and a checklist for quick reference. This page introduces all four methods. Follow the links to the full guides to apply them in practice.

The workshop is free and requires no registration. All guides are written for independent use — you do not need to work through them in order. Choose the method most relevant to the type of content you want to evaluate.

After completing this workshop, you will be able to:

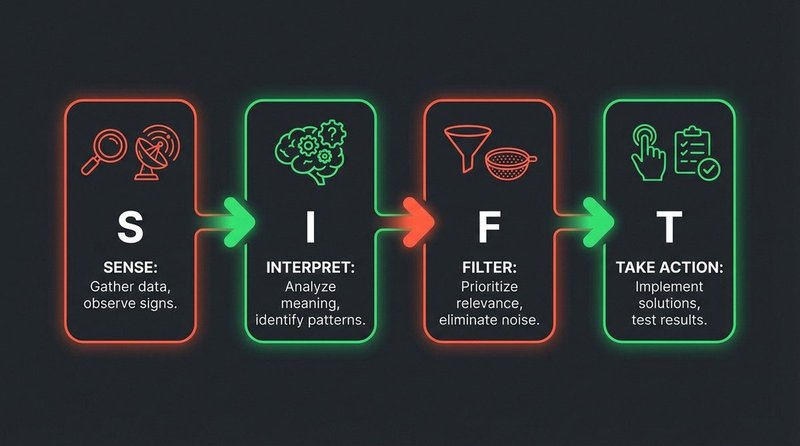

- Apply the SIFT method to evaluate any piece of online information before sharing

- Use reverse image search to verify or disprove visual claims

- Recognize the emotional language patterns that signal manipulative content

- Identify key technical and behavioral markers of AI-generated video (deepfakes)

Method 1 — SIFT: A Four-Step Verification Framework

SIFT is one of the most widely adopted information verification methods in media literacy education. It was developed by Mike Caulfield at Washington State University and is now used in K-12 curricula, university programs, and professional journalism training across the US and Europe.

The method addresses a specific problem: most people evaluate information based on how it feels rather than on evidence. SIFT interrupts that default by introducing structured friction: four deliberate steps taken before any sharing or opinion formation. The steps are Stop, Investigate the source, Find better coverage, and Trace claims, quotes, and media to their original context.

Method 2 — Reverse Image Search

Repurposed images are one of the most common vectors in fake news. A photo from a 2013 flood gets shared as evidence of a 2024 disaster. A protest image from one country gets captioned as if from another. Reverse image search breaks this tactic in under two minutes.

The core process: right-click any image online and select the image-search option (labelled along the lines of “Search image” in Chrome and Edge; the exact wording varies by browser version), or upload the image directly to Google Images, TinEye, or Yandex Images. The search returns indexed copies of that image, often with dates, sources, and original captions. An image that first appeared years before the claimed event date is almost certainly being misrepresented.
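The date comparison at the end of this process can be sketched in a few lines of Python. This is a minimal illustration, not part of the guide's workflow: the function name `check_image_claim` and the sample dates are hypothetical, and the dates themselves would have to be copied by hand from the search results.

```python
from datetime import date


def check_image_claim(indexed_dates, claimed_event_date):
    """Compare the earliest known copy of an image with the claimed event date.

    indexed_dates: dates on which copies of the image were found online
    claimed_event_date: the date of the event the image supposedly shows
    """
    first_seen = min(indexed_dates)
    gap_days = (claimed_event_date - first_seen).days
    return {
        "first_seen": first_seen,
        "gap_days": gap_days,
        # True means the image existed before the event it is said to show
        "predates_event": gap_days > 0,
    }


# Hypothetical example: copies found in 2013 and 2019, caption claims 2024
result = check_image_claim(
    [date(2019, 3, 2), date(2013, 6, 4)],
    date(2024, 9, 15),
)
print(result["first_seen"], result["predates_event"])
```

A positive gap only shows that the image is older than the claimed event. A gap of zero or less does not confirm the claim, because the earliest indexed copy is not necessarily the first publication.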

Key refinements covered in the full guide: searching by screenshot when direct upload fails, using Yandex for broader coverage (particularly effective for Eastern European and Russian-language sources), and combining reverse image search with EXIF metadata inspection for images that have not yet been widely indexed. The guide also covers how to handle cropped or color-adjusted copies, which can evade basic reverse search.

Method 3 — Recognizing Emotional Language in Headlines

Emotionally charged language in headlines is not evidence of fake news by itself — real events can and do generate strong reactions. But a consistent cluster of emotional manipulation techniques is a reliable warning sign that the content prioritizes reaction over accuracy.

The patterns to watch for: absolute language (“NEVER”, “ALWAYS”, “DESTROYS”, “PROVES ONCE AND FOR ALL”), vague but alarming attribution (“experts warn”, “sources confirm” without names or institutions), urgency framing (“before it’s deleted”, “share before they take this down”), and identity threat framing (“what they’re doing to your children/community/country”). These patterns appear in both low-quality clickbait and sophisticated state-sponsored disinformation, which is why recognizing them is a transferable skill across content types.

None of these patterns proves a claim is false. They indicate that the content is engineered to produce a reaction before the reader evaluates the evidence. The SIFT method’s Stop step is the direct behavioral counter to this technique — slowing the share impulse long enough to apply even minimal scrutiny.
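The four pattern families above can be approximated with a simple keyword check. The sketch below is an assumption-laden illustration: the names `PATTERNS` and `flag_headline` are hypothetical, the phrase lists contain only the examples quoted in this section, and the attribution check cannot tell whether a name or institution follows the phrase.

```python
import re

# Phrase lists are limited to the examples named in this section.
PATTERNS = {
    "absolute_language": re.compile(
        r"\b(never|always|destroys|proves once and for all)\b", re.IGNORECASE
    ),
    "vague_attribution": re.compile(
        r"\b(experts warn|sources confirm)\b", re.IGNORECASE
    ),
    "urgency_framing": re.compile(
        r"\b(before it['’]s deleted|before they take this down)\b", re.IGNORECASE
    ),
    "identity_threat": re.compile(
        r"\bwhat they['’]re doing to your\b", re.IGNORECASE
    ),
}


def flag_headline(headline: str) -> list[str]:
    """Return the names of the pattern families found in a headline."""
    return [name for name, pattern in PATTERNS.items() if pattern.search(headline)]


print(flag_headline(
    "Experts warn: this video DESTROYS the official story, "
    "share before they take this down"
))
```

A non-empty result is a prompt to slow down and check the claim, not a verdict that it is false.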

Full Guide: Emotional Language in Headlines — patterns and examples →

Method 4 — Identifying Deepfakes

AI-generated synthetic video (deepfakes) has become technically accessible enough that individuals with consumer hardware can now produce convincing face-swaps and voice clones. Detection is increasingly difficult, but not impossible — current deepfake generation methods leave consistent artifact patterns that remain identifiable with focused analysis.

The most reliable current visual indicators: unnatural blinking patterns or absence of blinking, inconsistent lighting between the subject’s face and the surrounding environment, blurring or warping at hairline edges and ear boundaries, inconsistent audio-to-lip synchronization visible under frame-by-frame review, and unnatural skin texture smoothing (a byproduct of the neural rendering process). No single indicator is conclusive. A combination of three or more warrants serious scrutiny.
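The "three or more" rule of thumb can be written down as a small checklist helper. This is a sketch under stated assumptions: the indicator labels and the function `review_video` are hypothetical names for the five indicators listed above, and the observations themselves still come from a human reviewer, not from automated detection.

```python
# Hypothetical labels for the five visual indicators listed above.
INDICATORS = (
    "unnatural_blinking",
    "inconsistent_lighting",
    "edge_warping",
    "lip_sync_mismatch",
    "skin_smoothing",
)


def review_video(observed: set[str], threshold: int = 3) -> dict:
    """Count manually observed indicators and apply the threshold."""
    unknown = observed - set(INDICATORS)
    if unknown:
        raise ValueError(f"Unknown indicators: {sorted(unknown)}")
    count = len(observed)
    return {"count": count, "needs_scrutiny": count >= threshold}


print(review_video({"unnatural_blinking", "edge_warping", "skin_smoothing"}))
```

A count below the threshold does not mean a video is authentic. It only means the visual indicators alone do not justify a conclusion, and provenance checks still apply.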

Technical tools covered in the full guide include Deepware Scanner and FakeCatcher, a detection method developed at Intel. Behavioral and provenance analysis is equally important: who published the video, through which channel, with what claimed origin story, and whether the context matches the content. These questions are often faster to resolve than technical artifact analysis.

Full Deepfake Detection Guide — tools and step-by-step analysis →

Who This Workshop Is For

Students

Secondary school and university students navigating information-dense environments. Each method is designed to be applied quickly — a SIFT check takes two minutes, a reverse image search takes one. No specialist tools or accounts required. The guides use real cases from the database as worked examples rather than hypothetical scenarios.

Teachers and Educators

Each guide includes worked examples and downloadable checklists suitable for classroom use. The SIFT and emotional language guides map directly to media literacy competency standards in German, Austrian, and EU curriculum frameworks. Contact the site for tailored workshop materials or session planning support.

Journalists and Researchers

The reverse image search and deepfake detection guides go into more technical depth and reference professional-grade tools alongside the free options. The Fake News Database provides documented case studies organized by method category, useful for research or as teaching material in journalism programs.

Common Misinformation Patterns

These patterns appear repeatedly in fake news content. Learning to recognize them is the first step toward media literacy.

Ready to practice?

Browse real documented cases in our database and test your ability to spot the patterns.

Open the Database