Deepfakes are AI-generated or AI-manipulated video and audio that make a person appear to say or do something they never said or did. Visual artifact analysis catches lower-quality deepfakes, but provenance analysis — tracing where a video first appeared and who published it — is the more reliable method for the majority of manipulated media circulating online.

What Deepfakes Are and How Common They Are

The term “deepfake” refers specifically to media generated or manipulated using deep learning: neural networks trained on large datasets of real video or audio to produce synthetic output that resembles a specific person. Deepfakes fall into two primary categories: face-swap deepfakes (replacing one person’s face with another’s in existing video) and full synthesis (generating a person’s likeness or voice from scratch, as in audio clones).

The best-documented political deepfake is the March 2022 surrender video of Ukrainian President Volodymyr Zelensky. The video appeared on the Ukrainian news website Ukraine 24 after a hack, circulated widely on social media, and was taken down by Meta, YouTube, and Twitter/X the same day. Zelensky responded within hours on Telegram, confirming the video was fabricated. UC Berkeley computer vision researcher Hany Farid analyzed the video publicly, identifying a low-resolution encoding strategy used to obscure facial distortions, an unnatural head movement pattern, and lip-sync that did not fully match Ukrainian phonetics. The full documented case is in the Fake Off database.

The Zelensky video was unusual in its political targeting. Most deepfakes in circulation are non-consensual intimate imagery, estimated at 96% of deepfake videos online in the 2019 research by Sensity AI (then operating as Deeptrace). Synthetic audio clones used for financial fraud are the other major category. High-profile political deepfakes like the Zelensky video are relatively rare but disproportionately reported.

See also the AI and fake news: 2025 roundup for documented cases from the past year.

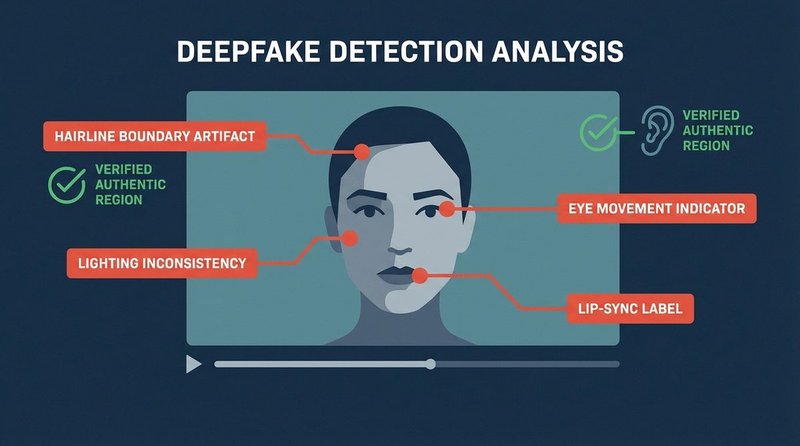

Visual Indicators: What to Look For

Visual artifact analysis works best on lower-quality deepfakes. High-quality synthetic video produced by state-level actors or professional operations may show none of these artifacts. Apply visual analysis as a first check — it can quickly confirm a low-quality fake — but do not rely on absence of artifacts as confirmation of authenticity.

Eye Blinking and Movement

Early-generation deepfake models (pre-2020) struggled with realistic blinking because training datasets contained fewer closed-eye frames than open-eye frames. This produced videos where the subject blinked rarely or not at all. Current models have largely corrected this, but rapid lateral eye movement and natural saccades (the micro-jumps the eyes make when scanning) remain difficult to synthesize convincingly. Watch a segment at 0.25x speed and observe whether eye movement looks physically plausible for a person speaking conversationally.

Hairline and Ear Boundaries

Face-swap deepfakes blend a synthetic face onto an existing video, and the boundary region — particularly the hairline, ears, and the edge of the jaw — is where the blend typically fails. Look for: soft or blurry edges where hair meets skin, inconsistent lighting between the face and the hair, geometric distortions when the subject turns their head, and earlobes that seem to stretch or deform slightly during movement. These artifacts are most visible in high-resolution stills extracted from the video rather than in playback.
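One way to obtain such stills is to export frames as PNG files with ffmpeg. The sketch below only builds the command line. It assumes ffmpeg is installed, and the function name, file names, and the one-frame-per-second sampling rate are illustrative choices rather than part of any tool discussed here.

```python
from pathlib import Path


def build_still_command(video: str, out_dir: str, frames_per_second: float = 1.0) -> list[str]:
    """Build an ffmpeg command that writes PNG stills sampled from a video.

    Hypothetical helper: the name and defaults are assumptions for illustration.
    """
    pattern = str(Path(out_dir) / "still_%04d.png")
    return [
        "ffmpeg",
        "-i", video,                         # input video file
        "-vf", f"fps={frames_per_second}",   # keep this many frames per second
        pattern,                             # numbered PNG files (lossless format)
    ]


cmd = build_still_command("suspect.mp4", "stills")
print(" ".join(cmd))
# To run it, pass the list to subprocess.run(cmd, check=True).
# The output directory must already exist.
```

Sampling at a higher rate around head turns gives more chances to catch a boundary distortion, at the cost of more images to review.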

Lighting and Shadow Consistency

The synthetic face in a face-swap deepfake is rendered with lighting derived from the source footage, not from the lighting conditions in the target video. When a person moves their head in natural light, the highlight and shadow pattern on their face moves consistently with the light source. In a deepfake, this consistency can break: the face may appear to have a different ambient light source than the background and neck, or shadows may not track correctly through head movement.

Lip-Sync and Phonetic Matching

Lip-sync accuracy is one of the areas where deepfake generation has improved most rapidly. However, phoneme-to-mouth-shape mapping (the specific lip positions required for each sound in a particular language) remains imperfect, particularly for languages other than English. The Zelensky video was partially identified through this mechanism: Ukrainian phonetics require specific bilabial movements that the synthetic model did not fully reproduce. For audio-only deepfakes (voice clones), listen for unnatural pauses between words, prosody that does not match the sentence structure, and a uniform noise floor that does not vary as a real recording’s would.

Skin Texture and High-Frequency Detail

Generative models tend to render skin as an overly smooth surface. Real human skin has pores, fine lines, and irregular texture that varies across different regions of the face. In authentic video, that texture is dynamic: it changes with movement and lighting. Synthetic skin tends to stay too smooth even in unflattering lighting and may show a plastic-like reflectivity. This is most visible around the nose, forehead, and cheeks when the subject is well-lit.

Detection Tools: What They Do and What They Do Not

Automated detection tools should be used as supporting evidence, not as definitive verdicts. Detection accuracy varies significantly depending on the generation method used to create the deepfake, and tools trained on older deepfake datasets may miss newer generation techniques.

Deepware Scanner

Deepware Scanner (free, browser-based) analyzes uploaded video files for deepfake signatures using deep learning models. It returns a probability score rather than a binary verdict. Scores above 80% indicate likely synthetic content; scores between 40% and 80% are inconclusive; scores below 40% indicate likely authentic content. Use Deepware for a rapid first assessment, not as a final determination.
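The score bands above can be written down as a small lookup. This is a minimal sketch of the thresholds as stated in this article, not Deepware's API. The function name is hypothetical, and treating exactly 40% and exactly 80% as inconclusive is an assumption.

```python
def interpret_score(score_percent: float) -> str:
    """Map a detector probability score (0-100) to the bands described above.

    Hypothetical helper; boundary values 40 and 80 are treated as inconclusive.
    """
    if not 0 <= score_percent <= 100:
        raise ValueError("score must be between 0 and 100")
    if score_percent > 80:
        return "likely synthetic"
    if score_percent >= 40:
        return "inconclusive"
    return "likely authentic"


for score in (92, 55, 12):
    print(score, interpret_score(score))
```

Whatever band a score falls into, it remains one piece of supporting evidence to weigh alongside visual and provenance checks.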

Intel FakeCatcher

Intel’s FakeCatcher, developed in collaboration with researchers from the State University of New York at Binghamton and announced in November 2022, uses a fundamentally different detection approach. Rather than looking for visual artifacts, it analyzes photoplethysmography (PPG) signals — the subtle color changes in skin caused by blood flow as the heart pumps. Real human faces show coherent PPG signals across the face; synthetic faces do not replicate this biological signal realistically. Intel claims a 96% detection accuracy rate in controlled testing. FakeCatcher is primarily available for institutional and platform use; it is not currently a consumer tool, but its methodology represents the direction automated detection is heading.

Sensity AI

Sensity AI provides enterprise deepfake detection for newsrooms and platforms, with a research division that publishes annual reports on deepfake volume and distribution patterns. Its detection API is not freely available, but its published research is an authoritative source for understanding the current scale of synthetic media.

What Tools Cannot Do

No automated detection tool is reliable against state-level adversarial deepfakes — synthetic media specifically optimized to defeat known detection methods. The existence of adversarial deepfakes is well-documented in academic literature. This means automated tools can confirm a low-to-medium quality deepfake, but a negative result (“likely authentic”) does not guarantee authenticity when the stakes are high. Combine tool output with visual analysis and, most importantly, provenance investigation.

Provenance Analysis: The Most Reliable Method

For the majority of deepfakes circulating on social media, provenance analysis (tracing the origin and distribution chain of the content) is more reliable than visual or automated analysis. A video that circulates first on known state-linked influence operation accounts, that comes with no verifiable documentation of the event it claims to show, and that no credible news organization can corroborate should be treated as unverified regardless of what it shows.

Provenance analysis for video follows the same principles as reverse image search for photographs. Apply the Reverse Image Search workflow using the InVID/WeVerify plugin to extract keyframes and search each one independently. For full videos:

- Find the first appearance. Who published this video first? A search on the platform where you encountered it, combined with a keyframe reverse search, often surfaces the original upload. A video published first by anonymous accounts with no connection to the claimed event is a red flag.

- Check whether any credible outlet has independently verified the event depicted. If a video claims to show a political figure making a statement, that statement should be verifiable through official channels — press conferences, official social media, wire service reporting. Absence of any corroboration is significant.

- Check whether the claimed speaker has issued a statement. Most political deepfakes are repudiated quickly by the person depicted. Search for responses from the depicted individual’s verified accounts and official communications.

- Examine the distribution network. Accounts that first spread a suspected deepfake often have histories of spreading other suspected synthetic or manipulated content. Tools like the Botometer from Indiana University can help assess whether amplifying accounts show automated behavior patterns.
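The four checks above can be recorded as a simple checklist so that findings are logged consistently across cases. This is a minimal sketch. The class, field names, and flag wording are hypothetical, and the output is a list of open concerns, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class ProvenanceFindings:
    """Answers to the four provenance checks (hypothetical structure)."""
    first_uploader_linked_to_event: bool
    independently_corroborated: bool
    repudiated_by_subject: bool
    amplifiers_have_manipulation_history: bool


def red_flags(findings: ProvenanceFindings) -> list[str]:
    """Return the concerns raised by a set of provenance findings."""
    flags = []
    if not findings.first_uploader_linked_to_event:
        flags.append("first uploader has no connection to the claimed event")
    if not findings.independently_corroborated:
        flags.append("no corroboration from official channels or credible outlets")
    if findings.repudiated_by_subject:
        flags.append("depicted person has repudiated the video")
    if findings.amplifiers_have_manipulation_history:
        flags.append("early amplifiers have a history of spreading manipulated content")
    return flags


# Illustrative values for a suspect clip, not a real case.
suspect = ProvenanceFindings(
    first_uploader_linked_to_event=False,
    independently_corroborated=False,
    repudiated_by_subject=True,
    amplifiers_have_manipulation_history=True,
)
for flag in red_flags(suspect):
    print("-", flag)
```

Keeping the flags as plain text rather than a numeric score avoids implying a precision that provenance work does not have.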

AI Voice Clones: The Audio-Only Deepfake

Audio deepfakes — voice clones that replicate a specific person’s voice using AI — are increasingly used in targeted financial fraud, non-consensual impersonation, and political disinformation. They do not require video and are significantly cheaper and faster to produce than video deepfakes.

A commonly documented pattern: a voice clone of a political figure or CEO is used to instruct employees, relatives, or the public to take an action, such as transferring funds, changing a security password, or supporting a false narrative. Cloning can require as little as three seconds of sample audio from any publicly available recording, according to Microsoft’s 2023 research on its VALL-E model and similar systems.

Audio verification indicators include: an absence of natural background variation (real recordings have a noise floor that shifts; synthetic audio is often unnaturally uniform), prosody mismatches (the rhythm and stress patterns of speech not matching the speaker’s documented style), and the absence of breathing sounds or mouth noise between words. For high-stakes audio, comparison against authenticated recordings from the same time period is the most reliable check.
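The noise-floor indicator can be made concrete by measuring how much the background level changes across a clip. The sketch below is a toy illustration on simulated samples. It assumes the input contains only pauses between words (no speech), and the function names, window size, and simulated noise levels are all assumptions. It does not define a threshold for real recordings.

```python
import math
import random
from statistics import mean, pstdev


def window_rms(samples, window):
    """RMS level of each consecutive non-overlapping window."""
    return [
        math.sqrt(mean(s * s for s in samples[i:i + window]))
        for i in range(0, len(samples) - window + 1, window)
    ]


def noise_floor_variation(pause_samples, window=400):
    """Coefficient of variation of the RMS level across windows.

    Values near zero mean the background level barely changes.
    """
    levels = window_rms(pause_samples, window)
    return pstdev(levels) / mean(levels)


rng = random.Random(1)
n = 8000
# Simulated synthetic clip: background noise with a constant level.
uniform = [rng.gauss(0.0, 0.01) for _ in range(n)]
# Simulated real recording: background level drifts slowly over time.
shifting = [
    rng.gauss(0.0, 0.01 * (1.0 + 0.5 * math.sin(2 * math.pi * i / n)))
    for i in range(n)
]

uniform_cv = noise_floor_variation(uniform)
shifting_cv = noise_floor_variation(shifting)
print(f"uniform: {uniform_cv:.3f}, shifting: {shifting_cv:.3f}")
```

A low variation figure is a reason to look closer, not proof of synthesis, since clean studio recordings can also have a very stable noise floor.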

Deepfake Identification Quick-Reference

- Visual check (30 seconds): Play the clip at 0.25x speed and look for hairline blending artifacts, lighting inconsistency between face and background, unnatural eye movement, and oversmoothed skin texture.

- Lip-sync check: Does the mouth shape match the sounds being spoken? Pay particular attention if the speech is in a language other than English.

- Run Deepware Scanner for an automated probability assessment. Use as supporting evidence only, not as a final verdict.

- Provenance first: Who published the video first? Can the depicted event be independently corroborated through official channels and credible newsrooms?

- Check for official repudiation: Has the depicted person responded on verified official accounts?

- For audio-only clips: Listen for uniform noise floor, prosody mismatches, and absence of natural breathing or mouth sounds.

- Remember: Absence of visual artifacts does not confirm authenticity for high-quality deepfakes. Provenance analysis is the method that scales.